Uncompressed video in the cloud is an answer to the dreams that many people are yet to have, but the early adopters of cloud workflows, those who are really embedding the cloud into their production and playout efforts, are already asking for it. AWS have developed a way of delivering this between computers within their infrastructure and have invited a vendor to explain how they get this high-bandwidth content in and out.

On The Broadcast Knowledge we don’t normally feature such vendor-specific talks, but AWS is usually the sole exception to the rule as what’s done in AWS is typically highly informative to many other cloud providers. In this case, AWS is first to market with an in-cloud, high-bitrate video transfer technology, which is in itself highly interesting.

LTN’s Alan Young is first to speak, describing the traditional broadcast workflow with the example of a stadium feeding the broadcaster’s building, which then sends the transmission feeds by satellite or dedicated links to the transmission and streaming systems, which are often located elsewhere. LTN feel this robs the broadcaster of flexibility and of the cost savings available from lower-cost internet links. The hybrid that he sees working in the medium term is feeding the cloud directly from the broadcaster, which allows production workflows to take place in the cloud. After this has happened, the video can either come back to the broadcaster before being passed on to transmission or go directly to one or more of the transmission systems. Alan’s view is that the interconnecting network between the broadcaster and the cloud needs to be reliable, high quality, low-latency and able to handle any bandwidth of signal, even uncompressed.

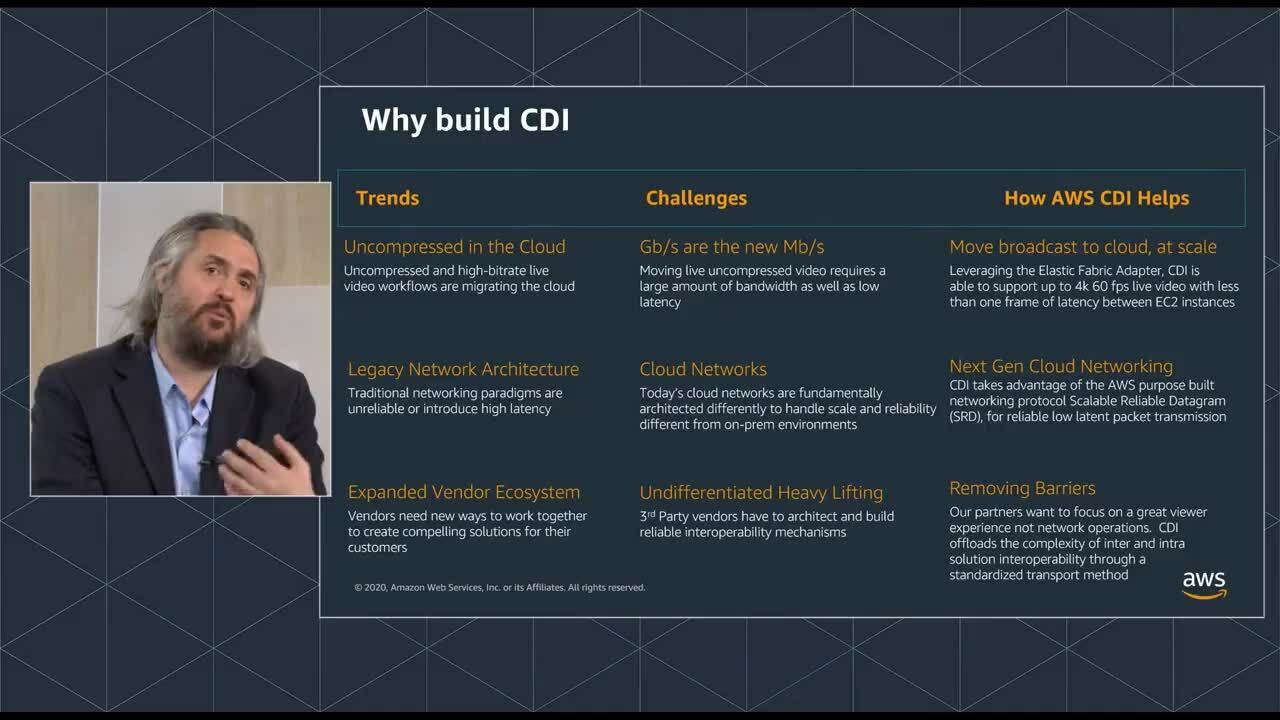

Once in the cloud, AWS Cloud Digital Interface (CDI) is what allows video to travel reliably from one computer to another. Andy Kane explains what drove the creation of this product. With the mantra that ‘gigabits are the new megabits’, they looked at how they could move high-bandwidth signals around AWS reliably, with the aim of abstracting the difficulty of infrastructure away from the workflow. The driver for uncompressed in the cloud is reducing the number of re-encoding stages, since each of them hits latency hard and, for professional workflows, we’re trying to keep latency as close to zero as possible. By creating a default interface, the hope is that vendors working through a common CDI interface will interoperate more easily. LTN estimate their network latency to be around 200ms, which is already a fifth of a second, so much more latency on top of that will creep up to a second quite easily.

David Griggs explains some of the technical detail of CDI. For instance, it can send data of any format, be that raw packetised video, audio, ancillary data or compressed data, using UDP, multicast between EC2 instances within a placement group. It has a target latency of less than one frame and has been tested up to UHD 60fps. It is based on the Elastic Fabric Adapter, which is a free option for EC2 instances and uses kernel bypass techniques to speed up and better control network transfers. CPU use scales linearly with resolution, so where 1080p60 takes 20% of a CPU, UHD, with four times the pixels, would take 80%. Each stream is expected to have its own CPU.
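To put rough numbers on those figures, here is a minimal Python sketch of the arithmetic. The 20 bits per pixel (10-bit 4:2:2 sampling) and the active-picture-only calculation are assumptions made for illustration and were not stated in the talk; the function names are hypothetical. Only the 20% CPU figure for 1080p60 and the linear scaling come from the presentation.

```python
# Back-of-envelope figures for uncompressed video, not an AWS CDI API example.

# Assumption (not from the talk): 10-bit 4:2:2 sampling, i.e. 20 bits per pixel.
BITS_PER_PIXEL = 20

HD = (1920, 1080)
UHD = (3840, 2160)


def active_bitrate_gbps(width, height, fps, bits_per_pixel=BITS_PER_PIXEL):
    """Bitrate of the active picture only (no blanking, audio or ancillary data)."""
    return width * height * fps * bits_per_pixel / 1e9


def scaled_cpu_percent(base_percent, base_pixels, target_pixels):
    """Scale CPU use linearly with pixel count, as described in the talk."""
    return base_percent * target_pixels / base_pixels


def frame_duration_ms(fps):
    """Duration of one frame, the upper bound of a 'less than one frame' latency target."""
    return 1000 / fps


if __name__ == "__main__":
    hd_pixels = HD[0] * HD[1]
    uhd_pixels = UHD[0] * UHD[1]
    print(f"1080p60: {active_bitrate_gbps(*HD, 60):.2f} Gb/s")
    print(f"UHD p60: {active_bitrate_gbps(*UHD, 60):.2f} Gb/s")
    print(f"UHD CPU: {scaled_cpu_percent(20, hd_pixels, uhd_pixels):.0f}% of one CPU")
    print(f"One frame at 60fps: {frame_duration_ms(60):.1f} ms")
```

Under these assumptions a single UHD 60fps stream is close to 10 Gb/s, which gives a sense of why ‘gigabits are the new megabits’, and a sub-frame latency target at 60fps means staying under roughly 17ms.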

The video ends with Alan looking at the future where all broadcast functionality can be done in the cloud. For him, it’s an all-virtual future powered by increasingly accessible high-bandwidth internet connectivity coming in at less than the cost of bespoke, direct links. David Griggs adds that this is changing the financing model, moving from a continuing effort to maximise utilisation of purchased assets to a pay-as-you-go model using just the tools you need for each production.

Watch now!

Download the slides

Please note, if you follow the direct link, the video featured in this article is the seventh on the linked page.

Speakers

David Griggs, Senior Product Manager, AWS

Andy Kane, Principal Business Development Manager, AWS

Alan Young, CTO and Head of Strategy, LTN Global