Getting video over the internet and around the cloud has well-established solutions, but they are continuing to evolve and are still new to some. This video looks at the workflows made possible by teaming up SRT, RIST and NDI, with a glimpse into projects that went live in 2020. We also get a deeper look at RIST’s features with a Q&A.

This video from SMPTE’s New York section starts with Bryan Nelson from Alpha Video, who’s been involved in many cloud-based NDI projects, many of which also use SRT to get in and out of the cloud. NDI’s a lightly compressed, low-delay codec suitable for production and works well on 1GbE networks. Not dependent on multicast, it’s a technology that lends itself to cloud-based production, where it’s found many uses. Bryan looks at a number of workflows that are also enabled by the Sienna production system, which can use many video formats including NDI.

For more information on SRT and RIST, have a look at this SMPTE video outlining how they work and the differences. For a deeper dive into NDI, this SMPTE webinar with VizRT explains how it works and also gives demos of the same software that Bryan uses. To get a feel for how NDI fits in with live production compared to SMPTE’s uncompressed ST 2110, this IBC panel discussion, ‘Where can SMPTE ST 2110 and NDI Co-exist?’, explores the topic further.

Bryan’s first example is the 2020 NFL draft, which used remote contribution from iPhones streaming over SRT. All streams were aggregated in AWS and converted to NDI, then routed and fed to NDI multiviewers. These were passed down to on-prem NDI processors, running on HP ProLiant servers, which output SDI for handoff to other broadcast workflows. The router could be controlled by soft panels but also by hardware panels on-prem. Bryan explores an extension to this idea where multiple cloud domains can be used, with NDI being the handoff between them. In one cloud system, VizRT vision mixing and graphics can be added, with multiviewers and other outputs being sent via SRT to remote directors, producers etc. Another cloud system could be controlled by a third party, with other processing ahead of the feed being sent to site and decoded to SDI on-prem. This can be totally separate from acquisition in SDI & NDI, with cameras located elsewhere. SRT & NDI become the mediators in this decentralised production environment.

Bryan finishes off by talking about remote NLE monitoring and various types of MCR monitoring. NLE editing is made easy through NDI integration within Adobe Premiere and Avid Media Composer. It’s possible to bring all of these into a processing engine and move them over the public internet for viewing elsewhere via Apple TV or otherwise.

Ciro Noronha from Cobalt Digital takes the last half of the video to talk about RIST. In addition to the talks mentioned above, Ciro recently gave a talk exploring the many RIST use cases. A good written overview of RIST can be found here.

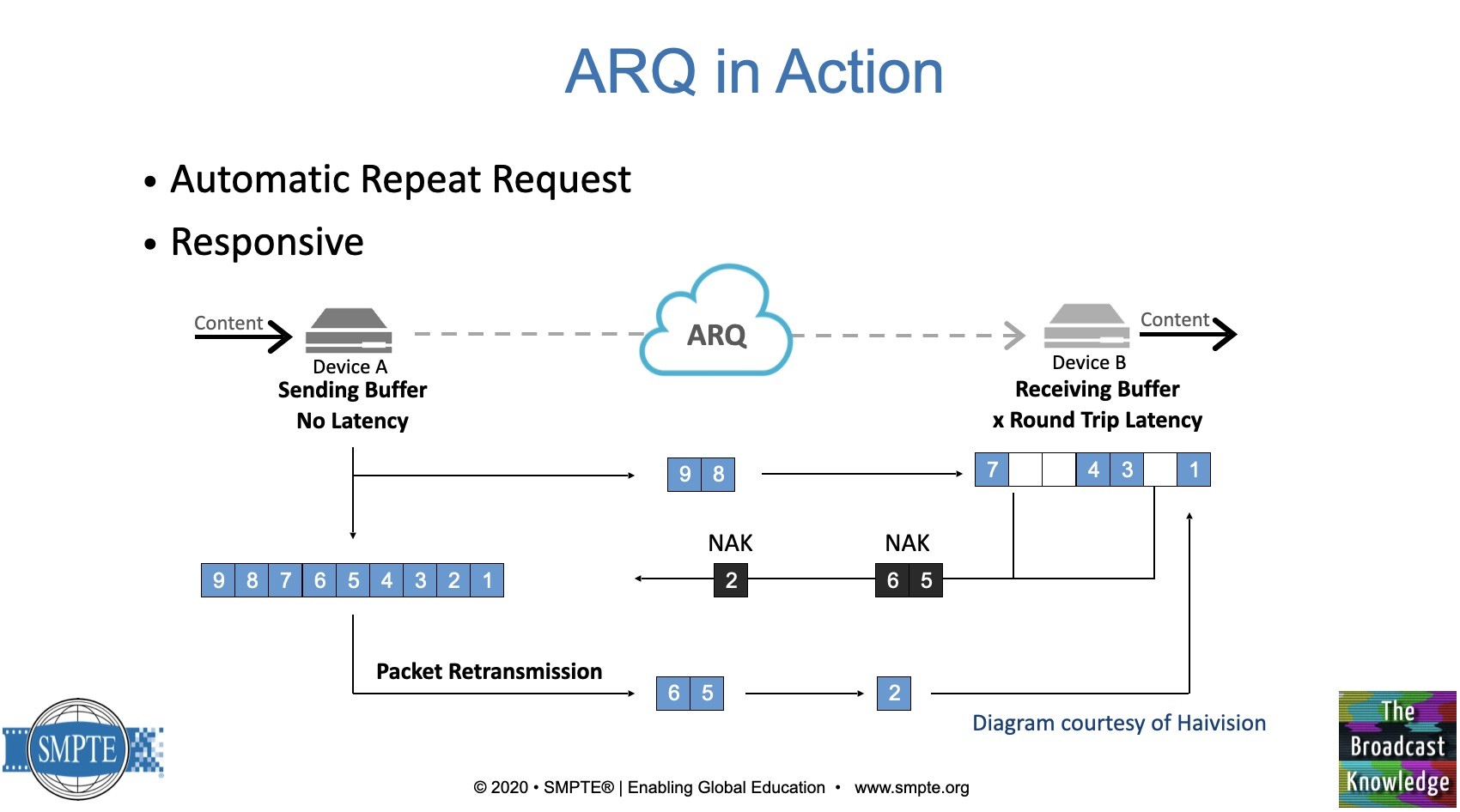

Ciro looks at the two published profiles that form RIST, the Simple profile and the Main profile. The Simple profile defines RTP interoperability with error correction based on re-requesting lost packets, with the option of bonding links. Ciro covers its use of RTCP for maintaining the channel and carrying the negative acknowledgements (NACKs), which are based on RFC 4585. RIST can bond multiple links or use SMPTE ST 2022-7 seamless switching.
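To make the NACK mechanism a little more concrete, here is a minimal Python sketch of how a receiver might pack a set of missing RTP sequence numbers into the bitmask-style Generic NACK entries defined in RFC 4585. Each entry holds a 16-bit packet ID (PID) and a 16-bit bitmask of following lost packets (BLP), so one entry can report up to 17 losses. The function names are hypothetical, sequence-number wraparound is ignored for brevity, and this is not taken from any RIST implementation or from the talk.

```python
import struct
from typing import Iterable, List, Tuple


def build_generic_nack_fci(lost: Iterable[int]) -> List[Tuple[int, int]]:
    """Group lost RTP sequence numbers into (PID, BLP) pairs (RFC 4585 Generic NACK).

    PID is the first lost sequence number in the group; bit i-1 of BLP
    (counting from the least significant bit) marks PID+i as lost too.
    Simplification: sequence-number wraparound at 65535 is not handled.
    """
    pending = sorted({s & 0xFFFF for s in lost})
    entries: List[Tuple[int, int]] = []
    i = 0
    while i < len(pending):
        pid = pending[i]
        blp = 0
        i += 1
        # Fold any losses within the next 16 sequence numbers into the bitmask.
        while i < len(pending) and pending[i] - pid <= 16:
            blp |= 1 << (pending[i] - pid - 1)
            i += 1
        entries.append((pid, blp))
    return entries


def pack_fci(entries: List[Tuple[int, int]]) -> bytes:
    """Serialise (PID, BLP) pairs as network-order 16-bit fields."""
    return b"".join(struct.pack("!HH", pid, blp) for pid, blp in entries)


if __name__ == "__main__":
    entries = build_generic_nack_fci([100, 101, 103, 117, 200])
    print(entries)                  # [(100, 5), (117, 0), (200, 0)]
    print(pack_fci(entries).hex())  # 006400050075000000c80000
```

The sketch only builds the feedback control information; a real receiver would wrap these entries in an RTCP feedback packet with the appropriate header and SSRC fields before sending it back to the sender.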

The Main profile builds on the Simple profile by adding encryption, authentication and tunnelling. Tunnels allow multiple flows down one connection, which simplifies firewall configuration and encryption, and allows either end to initiate the bi-directional link. The tunnel can also carry non-RIST traffic for any other purpose. The tunnels are GRE over UDP (RFC 8086). DTLS is used for encryption, which is almost identical to the TLS used to secure websites. DTLS uses certificates, meaning you get to authenticate the other end, not just encrypt the data. Alternatively, you can use a password, which avoids the need for certificates when they’re not needed or for one-to-many distribution. Ciro concludes by showing that RIST can work with up to 50% packet loss and answers many questions in the Q&A.
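The 50% packet loss figure can seem surprising, so here is a rough back-of-the-envelope sketch of why retransmission can cope with it. It assumes each transmission of a packet is lost independently with probability p, that retransmissions are lost at the same rate, and that the NACKs themselves always arrive; real networks lose packets in bursts, so treat this as intuition rather than a model of any actual RIST deployment. The function names are hypothetical.

```python
def residual_loss(p: float, retransmissions: int) -> float:
    """Probability a packet is still missing after the original send plus
    the given number of retransmissions, assuming independent losses."""
    return p ** (retransmissions + 1)


def expected_sends(p: float) -> float:
    """Average number of transmissions per packet if the sender keeps
    retrying until delivery (mean of a geometric distribution)."""
    return 1.0 / (1.0 - p)


if __name__ == "__main__":
    p = 0.5
    for n in (0, 3, 7):
        print(n, residual_loss(p, n))
    # 0 0.5
    # 3 0.0625
    # 7 0.00390625
    print(expected_sends(p))  # 2.0
```

Under these assumptions, recovering from 50% loss costs roughly double the bandwidth, and the receive buffer has to be long enough to allow several NACK and retransmission round trips.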

Watch now!

Speakers

Bryan Nelson
Sales Account Executive, Alpha Video

Ciro Noronha
President, RIST Forum
Executive Vice President of Engineering, Cobalt Digital