Is uncompressed video in the cloud, with just 6 frames of latency to get there and back, ready for production? WebRTC manages sub-second streaming in one direction and can even deliver AV1 in real time. The key to getting down to a 100ms round trip is to move to encoding that takes only milliseconds and to use uncompressed video in the cloud. This video shows how it can be done.

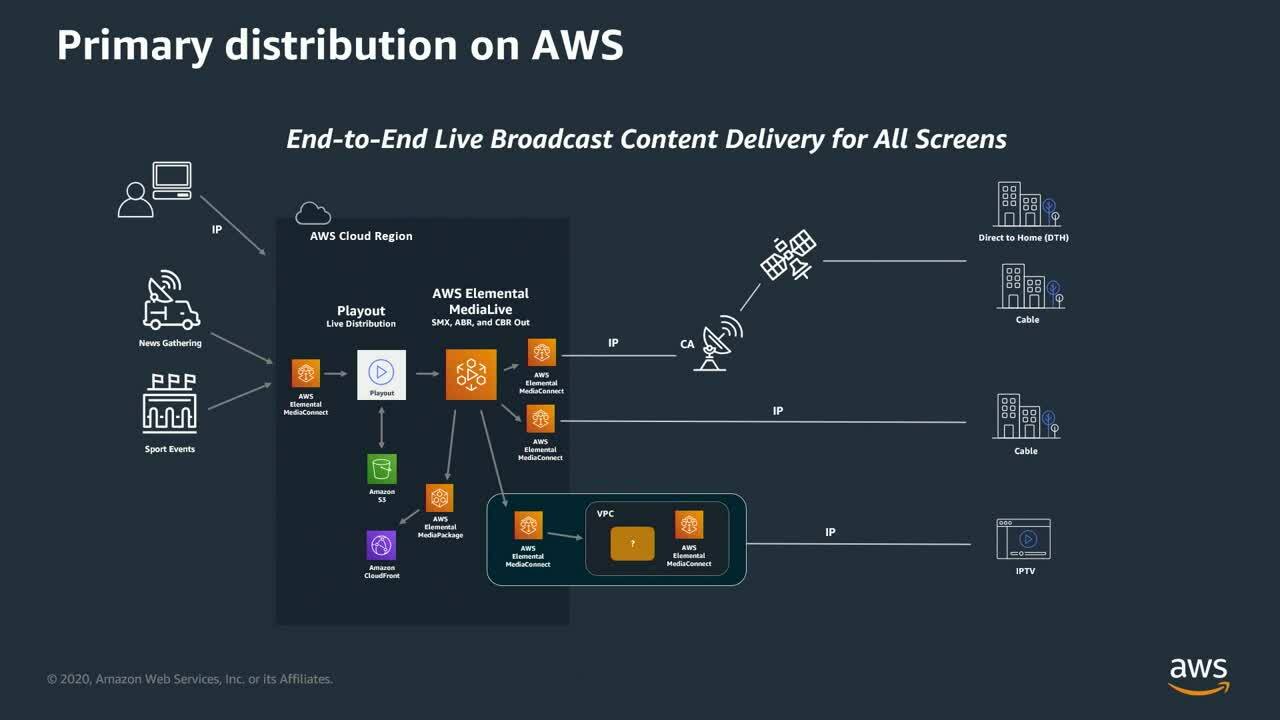

Fox has a clear direction to move into the cloud and last year joined AWS to explain how they've put their distribution into the cloud, remuxing feeds for ATSC transmitters, satellite uplinks and cable headends, and encoding for internet delivery. In this video, Fox's Joel Williams and Evan Statton from AWS explain their work together making this a reality. Joel explains that latency is not a very hot topic for distribution, as the distribution chain already contains many delays. The focus has instead been on getting the contribution feeds into playout and MCR monitoring quickly. After all, when people are counting down to an ad break, it needs to roll exactly on zero.

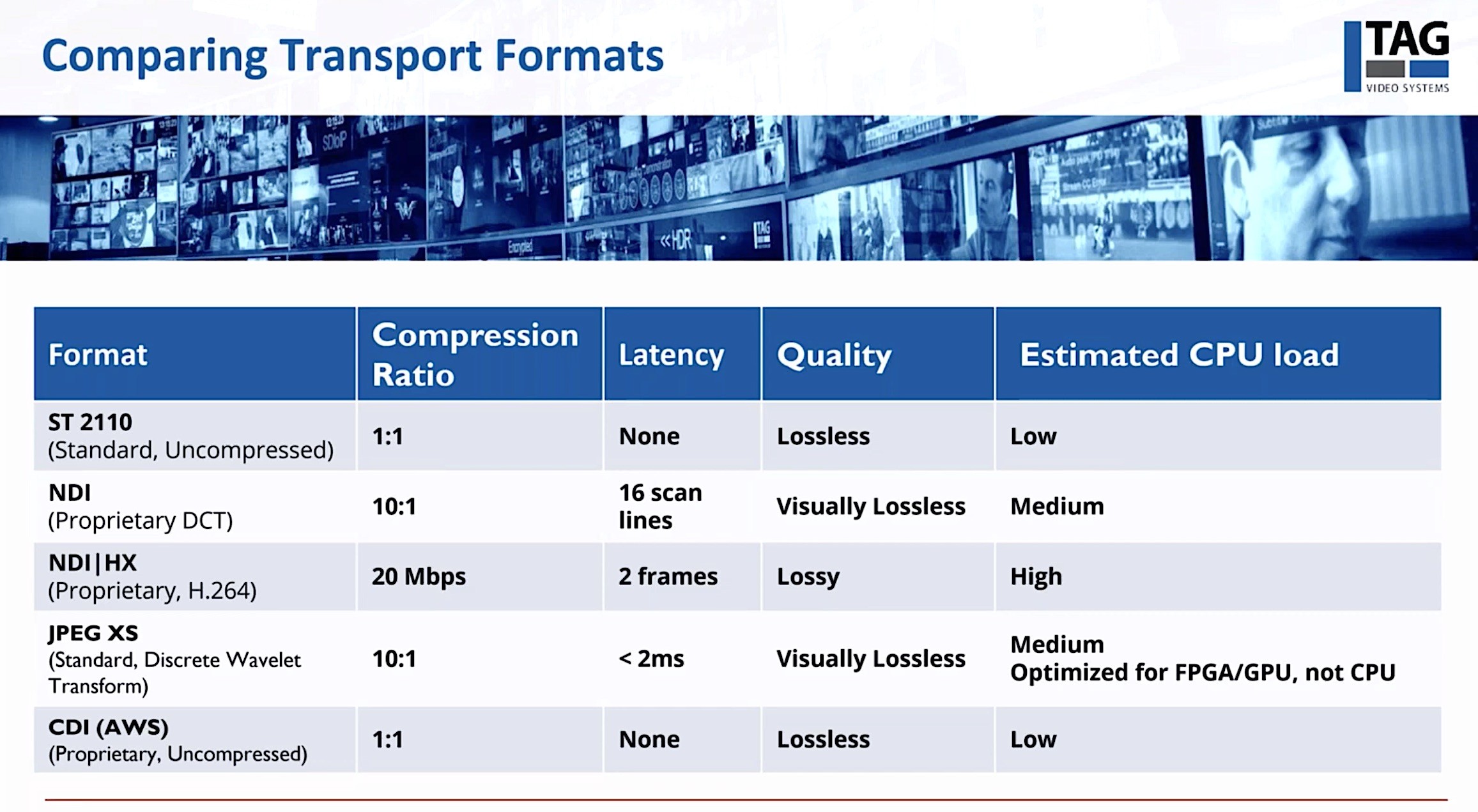

Evan explains the approach AWS has taken to solving this latency problem, starting with the idea of using SMPTE's ST 2110 in the cloud. ST 2110 video flows are typically at least 1 Gbps and, when implemented on-premise, are usually carried on a dedicated network with very strict timing. Cloud datacentres aren't like that, and Evan demonstrates this by showing how, across 8 video streams, there are video drops of several seconds, which is clearly not acceptable. Amazon, however, has a product called 'Scalable Reliable Datagram' (SRD) which is aimed at moving high-bitrate data through their cloud. Using a very small retransmission buffer, it's able to use multiple paths across the network to deliver uncompressed video in real time. Because the retransmission buffer is so small, it provides just enough healing to redeliver missing packets within the 16.7ms it takes to deliver a frame of 60fps video.
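To put rough numbers on these figures, the sketch below works out the frame period and the active-picture bitrate of an uncompressed flow. The 1920x1080 raster and 10-bit 4:2:2 sampling (20 bits per pixel on average) are assumptions for illustration rather than figures from the talk, and the function names are hypothetical. RTP, UDP and IP overhead, along with audio and ancillary data, are ignored, so a real ST 2110 flow would be somewhat larger.

```python
def frame_period_ms(fps: float) -> float:
    """Time taken to deliver one frame, in milliseconds."""
    return 1000.0 / fps


def active_video_bitrate_bps(width: int, height: int, fps: int,
                             bits_per_pixel: int = 20) -> int:
    """Bitrate of the active picture only.

    bits_per_pixel=20 assumes 10-bit 4:2:2 sampling (an assumption,
    not a figure from the talk). Packet overhead is not included.
    """
    return width * height * bits_per_pixel * fps


if __name__ == "__main__":
    print(f"Frame period at 60fps: {frame_period_ms(60):.1f} ms")
    for fps in (30, 60):
        gbps = active_video_bitrate_bps(1920, 1080, fps) / 1e9
        print(f"1080p{fps} active video: {gbps:.2f} Gbps")
```

Under these assumptions, 1080p at 30 frames per second comes to about 1.24 Gbps and 1080p at 60 frames per second to about 2.49 Gbps, while a single 60fps frame lasts roughly 16.7ms.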

On top of SRD, AWS have introduced CDI, the Cloud Digital Interface, which is able to describe uncompressed video flows in a way already familiar to software developers. This 'Audio Video Metadata' layer handles flows in the same way as 2110, for instance keeping essences separate. Evan says this has helped vendors react favourably to this new technology. For them, SRD with CDI can be used instead of UDP. This gives them familiar video data structures and, since SRD is implemented in the Nitro network card, packet processing is hidden from the application itself.

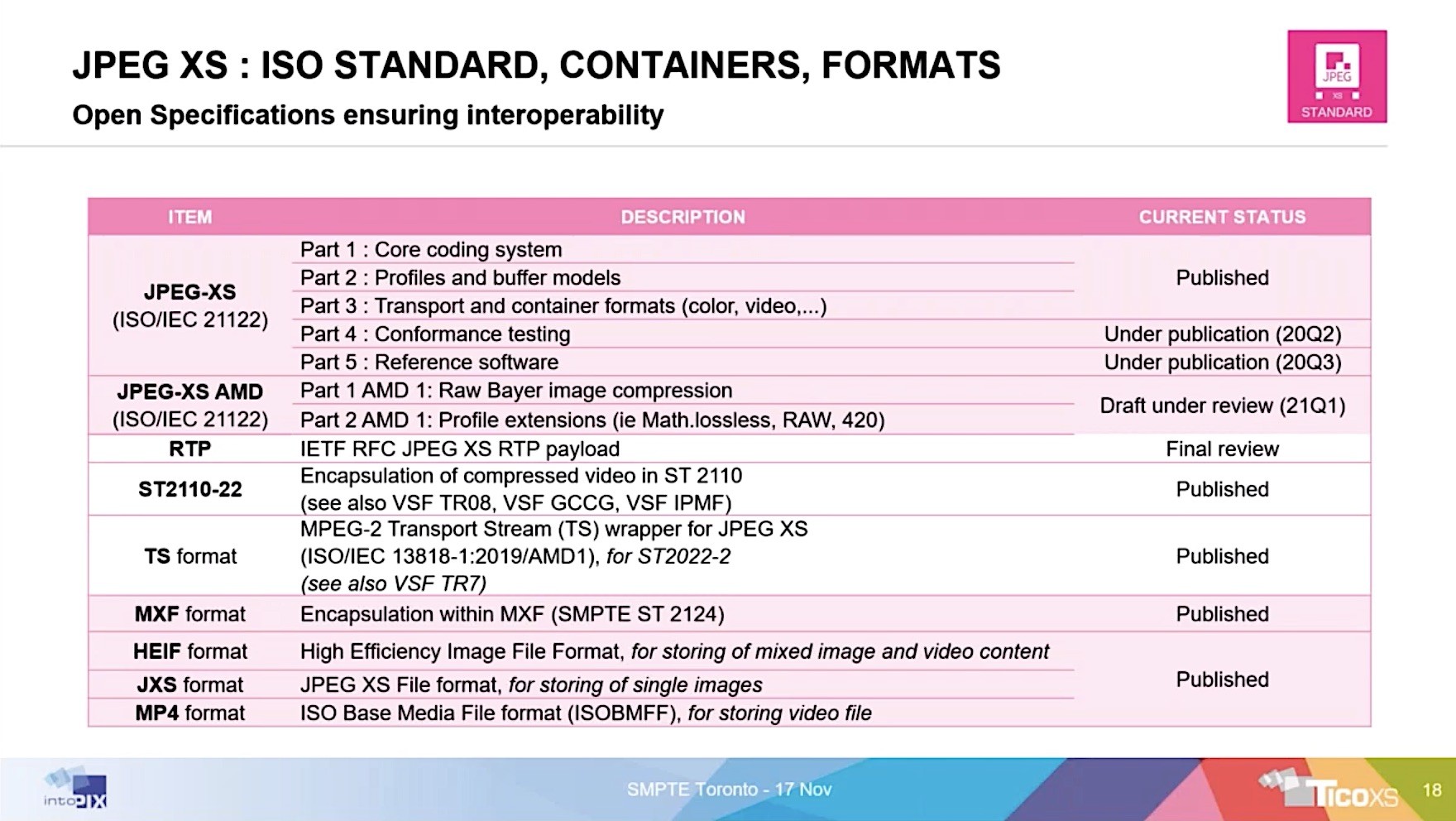

The final piece of the puzzle is keeping the journey into and out of the cloud low-latency. This is done using JPEG XS, which has an encoding time of a few milliseconds. Rather than using RIST, for instance, to protect this on the way into the cloud, Fox is testing ST 2022-7. A 2022-7 receiver takes in two identical streams, typically on two network interfaces, so it should end up with two copies of each packet. Where one copy gets lost, the other is still available. This gives path redundancy which a single stream will never be able to offer. Overall, the test with Fox's Arizona-based Technology Center is shown in the video to have only 6 frames of latency for the round trip. Assuming they used a California-based AWS datacentre, the ping time may have been as low as two frames. This leaves four frames for 2022-7 buffers, XS encoding and uncompressed processing in the cloud.
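The idea behind this kind of dual-path protection can be sketched in a few lines. This is a deliberately simplified model, not the ST 2022-7 specification or Fox's implementation: the packets are hypothetical (sequence number, payload) pairs, both paths are assumed to have fully arrived, and the 16-bit RTP sequence wrap-around and the bounded receive buffer that a real receiver needs are left out.

```python
from typing import Dict, Iterable, List, Tuple

# Hypothetical packet model: (sequence number, payload).
Packet = Tuple[int, bytes]


def merge_paths(path_a: Iterable[Packet], path_b: Iterable[Packet]) -> List[bytes]:
    """Rebuild one stream from two copies that each lost different packets.

    The first copy seen of each sequence number is kept and any later
    duplicate is dropped. Payloads are returned in sequence order.
    """
    merged: Dict[int, bytes] = {}
    for seq, payload in list(path_a) + list(path_b):
        merged.setdefault(seq, payload)  # ignore the duplicate copy
    return [merged[seq] for seq in sorted(merged)]


if __name__ == "__main__":
    # Path A lost packet 2, path B lost packet 4.
    path_a = [(0, b"p0"), (1, b"p1"), (3, b"p3"), (4, b"p4")]
    path_b = [(0, b"p0"), (1, b"p1"), (2, b"p2"), (3, b"p3")]
    print(merge_paths(path_a, path_b))
```

In this example each path has lost a different packet, yet the merged output contains all five payloads. A packet is only truly lost if both copies go missing.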

Watch now!

Speakers

Joel Williams, VP of Architecture & Engineering, Fox Corporation

Evan Statton, Principal Architect, Media & Entertainment, AWS