Remote production is in heightened demand at the moment, but the trend has been ongoing for several years. With each small advance in technology, it becomes practical for another event to go remote. Remote production solutions have to be extremely flexible, as a workflow that suits one company won’t work for the next. This is why the move to remote has been gradual over the last decade.

In this RAVENNA webinar with evangelist Andreas Hildebrand, Dirk Sykora from Lawo presents three remote production projects dating from 2016 to the present day.

The first case study is remote production for Belgian second division football. Working with Belgian telco Proximus along with Videohouse & NEP, Lawo set up remote production for stadia kitted out with 6 cameras and 2 commentary positions. With only 1 gigabit connectivity to the stadiums, they opted to use JPEG 2000 encoding at 100 Mbps, both for the camera feeds out of the stadia and for the two return feeds back in for the commentators.
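To see why 100 Mbps per feed fits comfortably, here is a rough bandwidth budget for one stadium. It is only a sketch: it assumes the 1 gigabit link is full duplex with 1000 Mbps available in each direction, and it ignores audio, control traffic and packet overhead, none of which are quantified in the talk. The function and constant names are made up for illustration.

```python
# Rough per-stadium bandwidth budget (illustrative assumptions, not figures from the talk
# beyond the feed counts, the 100 Mbps J2K rate and the 1 gigabit link).
LINK_CAPACITY_MBPS = 1000  # assumed usable capacity in each direction
FEED_RATE_MBPS = 100       # JPEG 2000 rate per video feed


def link_utilisation(feed_count, feed_rate_mbps=FEED_RATE_MBPS,
                     capacity_mbps=LINK_CAPACITY_MBPS):
    """Return (total Mbps, fraction of link capacity) for a number of feeds."""
    total_mbps = feed_count * feed_rate_mbps
    return total_mbps, total_mbps / capacity_mbps


# Six cameras leave the stadium, two return feeds come back for the commentators.
outbound_mbps, outbound_share = link_utilisation(6)
inbound_mbps, inbound_share = link_utilisation(2)

print(f"Stadium -> gallery: {outbound_mbps} Mbps ({outbound_share:.0%} of link)")
print(f"Gallery -> stadium: {inbound_mbps} Mbps ({inbound_share:.0%} of link)")
```

Under these assumptions the camera feeds take a little over half of the link in one direction, which leaves headroom for the bursts of unrelated traffic described later.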

The project called for two simultaneous matches feeding into an existing gallery/PCR. Deployment was swift with flightcases deployed remotely and a double set of equipment being installed into the fixed PCR. Overall latency was around 2.5 frames one-way, so the camera viewfinders were about 5 frames adrift once transport and en/decoding delay were accounted for.
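For a feel of what these frame counts mean in time, the conversion below turns frames into milliseconds. The frame rate is not stated in the summary, so the 25 and 50 frames per second used here are assumptions chosen as common European rates, and `frames_to_ms` is a hypothetical helper.

```python
def frames_to_ms(frames, frames_per_second):
    """Convert a delay expressed in frames to milliseconds."""
    return frames * 1000.0 / frames_per_second


ONE_WAY_FRAMES = 2.5                    # figure quoted in the talk
ROUND_TRIP_FRAMES = 2 * ONE_WAY_FRAMES  # camera out to the gallery and back to the viewfinder

# Assumed frame rates; the actual production rate is not given.
for fps in (25, 50):
    one_way_ms = frames_to_ms(ONE_WAY_FRAMES, fps)
    round_trip_ms = frames_to_ms(ROUND_TRIP_FRAMES, fps)
    print(f"{fps} fps: one-way {one_way_ms:.0f} ms, round trip {round_trip_ms:.0f} ms")
```

At an assumed 25 frames per second, 5 frames is 200 ms, which is long enough for a camera operator to notice when following fast action.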

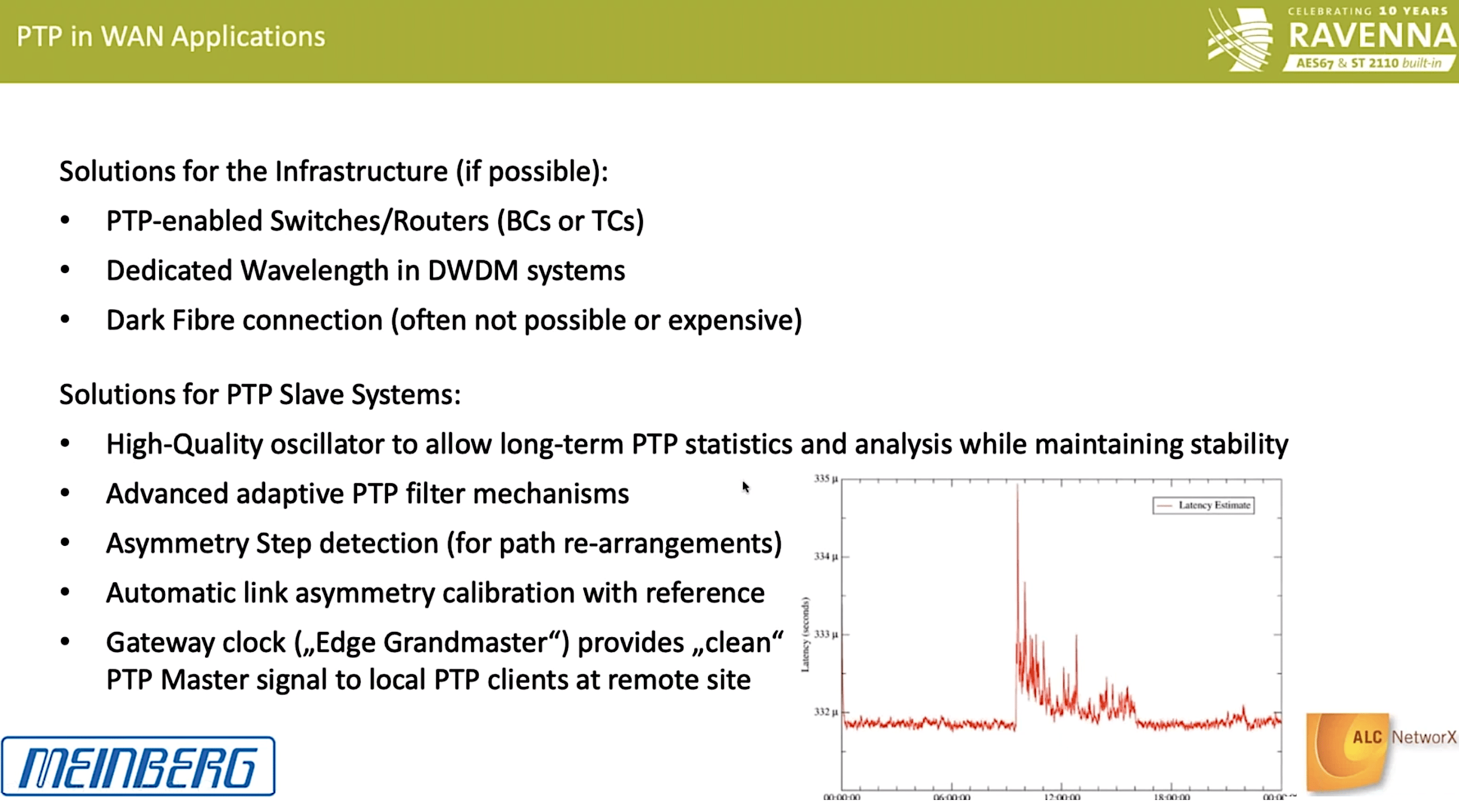

The main challenges were with the MPLS network into the stadia, which would spontaneously reroute and become loaded with unrelated traffic at around 21:00. Although there was packet loss, none was noticeable on the 100 Mbps J2K feeds. Latency for the commentators was a problem, so some local mixing was needed. Lastly, PTP wasn’t possible over the network. Timing was therefore derived from the return video feed into the stadium, which had come from the PTP-locked gallery. This incoming timing was then used to lock a locally generated PTP signal.

The next case study covers inter-country links for the European Council, connecting its Luxembourg and Brussels buildings. The project was to move all production to a single tech control room in Brussels and relied on two 10GbE links between the buildings, going through an Arista 7280 and carrying 18 videos in one direction and two in return. Although initially reluctant to compress, the Council realised after testing that VC-2, which offers around 4x compression, would work well and add no noticeable latency (approx. 20 ms end to end). Thanks to VC-2, the 10GbE links saw low usage from the project and the Council was able to migrate other business activities onto them. PTP was generated in Brussels, and Luxembourg regenerated its PTP from the Brussels signal for local distribution. Overall latency was 1 frame.

Lastly, Dirk outlines the work done for the Belgium Daily News, which had been bought out by DPG Media. This buy-out prompted a move from Brussels to Antwerp, where a new building opened. However, all of the technical equipment was already in Brussels. This led to the decision to remote control everything in Brussels from Antwerp. The production staff moved to Antwerp, which caused some issues, both from the disconnect between production and technical teams and from personnel relocating and getting used to new facilities.

The two locations were connected with a redundant 400GbE infrastructure using IP<->SDI gateways. Latency was 1 frame and, again, PTP on one site was derived from the incoming PTP from the other.

The video finishes with a detailed Q&A.

Watch now!

Speakers

Dirk Sykora, Technical Sales Manager, Lawo

Andreas Hildebrand, RAVENNA Evangelist, ALC NetworX