AV1 seems to be shaking off its reputation for slow encoding, now only 2x slower than HEVC. How practical, then is it to put AV1 into a real-time codec aiming for sub-second latency? This is exactly what the Alliance for Open Media are working on as parts of AV1 are perfectly suited for the use case.

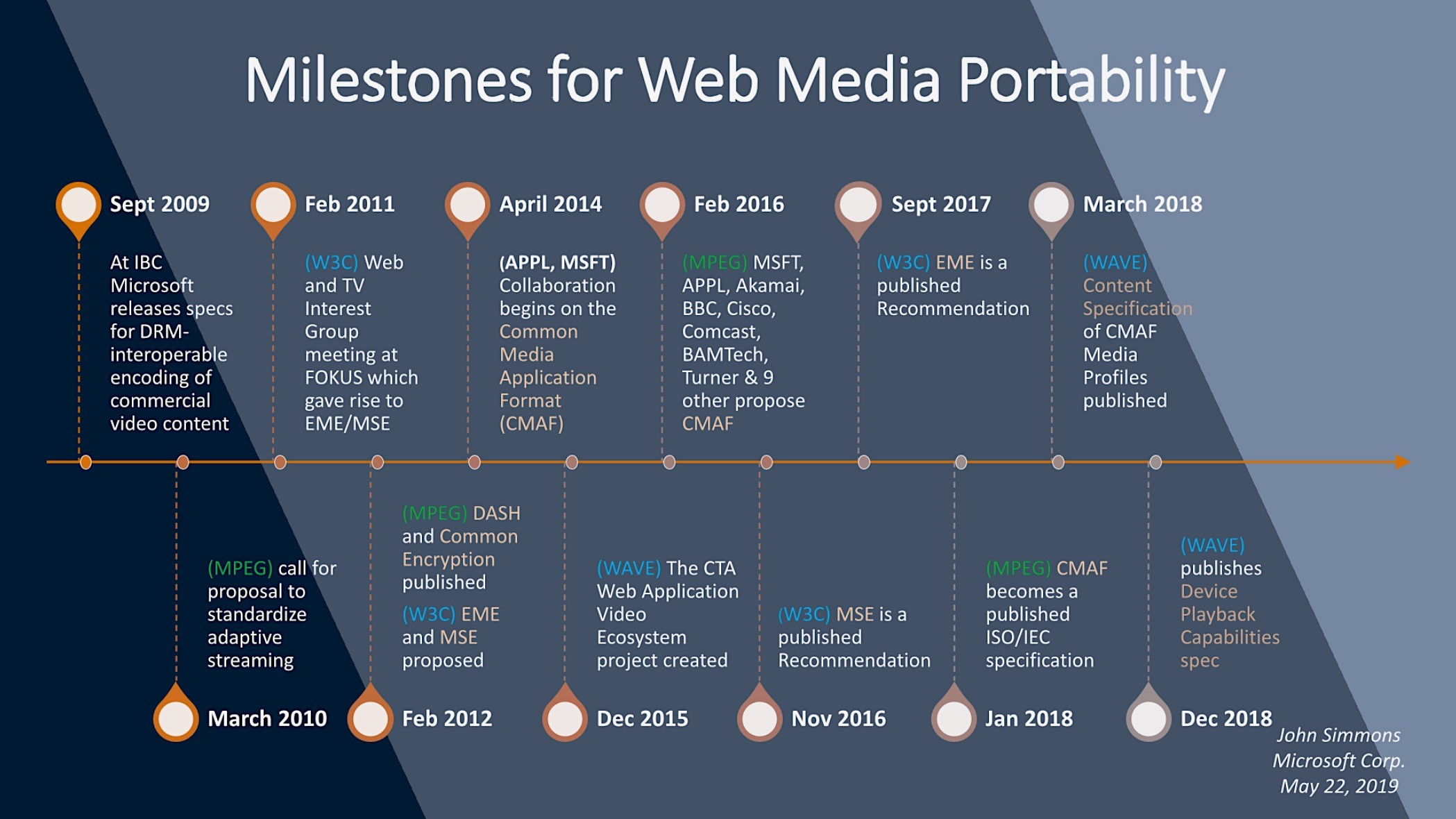

Dr Alex from CoSMo Software took the podium at the Alliance for Open Media Research Symposium to lay out the whys and wherefores of updating WebRTC to deliver AV1. He started by outlining the different requirements of real-time vs VoD. With non-live content, encoding time is often unrestricted allowing for complex encoding methods to achieve lower bitrates. Even live CMAF streams aiming to achieve a relatively low 3-second latency have time enough for much more complex encoding than real-time. Encoding, ingest, storage and delivery can all be separated into different parts of the workflow for VoD, whereas real-time is forced to collapse logical blocks down as much as possible. Unsurprisingly, Dr Alex outlines latency as the most important driver in the WebRTC use case.

When streaming, ABR isn’t quite as simple as with chunked formats. The different bit rate streams need to be generated at the encoder to save any transcoding delays. There are two ways of delivering these streams. One is to deliver them as separate streams, the other is to deliver only one, layered stream. The latter method is known as Scalable Video Coding (SVC) which sends a base layer of a low-resolution version of the video which can be decoded on its own. Within that stream, is also the information which builds on top of that video to create a higher-resolution version of the same stream. You can have multiple layers and hence provide information for 3, 4 or more streams.

Managing which streams get to the decoder is done through an SFU (Selective Forwarding Unit) which is a server to which WebRTC clients connect to receive just the stream, or parts of a stream, they need for their current bandwidth capability. It’s important to remember that compared to video conferencing solutions based on WebRTC, that streaming using WebRTC scales linearly. Whilst it’s difficult to hold a meeting with 50 people in a room, it’s possible to optimise what video is sent to everyone by only showing the last 5 speakers in full resolution, the others as thumbnails. Such optimisations are not available for video distribution, rather SFUs and media servers need to be scaled and cascaded. This should be simple, but testing can be difficult but it’s necessary to ensure quality and network resilience at scale.

Cisco have already demonstrated the first real-time AV1-based WebRTC system, though without SVC support. Work is ongoing to deliver improvements to RTP encapsulation of AV1 in WebRTC. For instance, providing Decoding Target Information which embeds information about frames without needing to decode the video itself. This information explains how important each frame is and how it relates to the other video. Such metadata can be used by the SFU or the decoder to understand which frames to drop and send/decode.

Watch now!

Download the slides

Speaker

|

Dr Alex Gouaillard Video Codec Working Group – Real-time subgroup, Allience for Open Media Founder, Directory & CEO, CoSMo Software Consulting Pte. Ltd. Co-founder & CTO, Millicast |